In part 4 of this series, I talked about how to generate the cluster debug log for digging deeper on what caused the WSFC or the workloads running on top of it to go offline. I also walked you thru the process of getting started with reading the logs.

Analyzing the cluster debug log is more of a root cause analysis (RCA) activity rather than a troubleshooting activity. When you start analyzing the cluster debug log, you are now in the process of identifying why something happened and what caused it. Remember the goal of troubleshooting availability issues that I highlighted in part 1? Bring the failover cluster or any workload running on top of it as quickly as you possibly can.

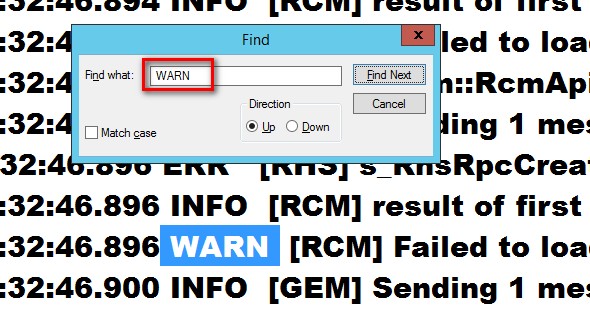

It’s easy to find the ERR and WARN keywords in the cluster debug log. Just use your favourite text editor to do the trick. The real challenge is interpreting the details of the event that occurred and making sense of them. This will be the focus of this blog post.

What does Code 89 stand for?

In part 1 of this blog series, I mentioned seeing Code 89 on the 2015 Chevy Cruze. If you’re not aware of what Code 89 means, you’ll probably end up scratching your head and figuring out what it is and why it appeared in the first place. The beauty of seeing Code 89 on the car dashboard is that I only see two things – the word “code” and the number 89.

Unfortunately, the cluster debug log entries are not as simple as that Code 89 display that I saw on the car dashboard. Let’s take a look at an entry in the cluster debug log, breaking the contents down to further understand what it means.

000012e4.00000674::2013/02/28-22:02:11.561 INFO [RES] Network Name <SQL Network Name (SQL01)>: The online thread is terminating before completing the operation: Registering network name resource SQL01 DNS records

Let’s start with this.

000012e4. This value before the first dot (.) represents the process ID – in hexaecimal notation – that is issuing the log entry. This process is typically the Resource Hosting System (RHS). We will explore the RHS in more detail in a later blog post. For now, we just need to know that the first few characters before the dot (.) represents the process ID.

00000674. This value after the first dot (.) represents the thread ID – in hexaecimal notation – that is issuing the log entry. You can trace thru what the thread is doing by searching for log entries that include this thread ID, similar to how I outlined in part 4 of this blog series. For example, if you start off with searching for the ERR keyword, you can grab the thread ID in the line where you saw the error and find every instance of the thread ID from top to bottom.

2013/02/28-22:02:11.561. This value represents the date and time (in GMT) when the error was generated. If the –UseLocalTime parameter was used in the Get-ClusterLog PowerShell cmdlet, this value will be using the local server’s time. Be cautious of this value, especially when you are dealing with multi-subnet, geographically dispersed clusters running SQL Server Availability Groups. You don’t want to get confused with what time was used to generate the error event.

INFO. This value represents the entry type. There are four (4) common types of entries – INFO (informational), WARN (warning), ERR (error) and DBG (debug). By default, you won’t see the DBG event entries unless you’ve configured the verbosity of the cluster debug log using the Set-ClusterLog PowerShell cmdlet. As I mentioned in part 4 of this series, I start with searching for ERR and WARN entries in the cluster debug log.

[RES] Network Name <SQL Network Name (SQL01)>. This value represents the cluster resource type together with the cluster resource name. Given that this shows a human-readable entry, it’s a lot easier for us to identify what this is. In this example, this cluster debug log entry is for the SQL Server cluster network name resource SQL01. This could be a virtual network name for your failover clustered instance (FCI) or the listener name for your Availability Group. Finding this specific cluster resource when looking at the Cluster Dependency Report or the Failover Cluster Manager is much easier when you have proper naming convention.

The online thread is terminating before completing the operation: Registering network name resource SQL01 DNS records. This describes what is going on with the clustered resource. Again, in human-readable format, it is easier to understand what this event is doing. From the log entry, we can see that the SQL Network Name (SQL01) cluster name resource could not be brought online because of some issues with DNS. Now, this could mean several different things – from issues with creating the virtual computer object in Active Directory to a corrupted DNS entry. What we do know is that it is an issue related to DNS record registration. Because this typically is outside of the DBA’s area of responsibility, we can simply escalate this to the Active Directory or DNS server administrators. (HINT: From my experience, this is commonly an Active Directory permissions issue so be sure to be in good terms with your AD administrators.)

Understanding the cluster debug log and making sense of the log entries can assist in writing better RCA reports. Knowing what caused the issue and what led to it can help us prevent it from happening again in the future.

But beyond writing better RCA reports, the cluster debug log is what Microsoft support engineers use to troubleshoot issues with failover clusters. When you open a support case with Microsoft, this is one of the things that they will ask for you to provide. In order to better assist them with the troubleshooting process and RCA, this needs to be available. I would highly recommend reading and analyzing some of your cluster debug logs to understand what is happening in your environment. You don’t need to wait for an issue to occur for you to start doing so. You just need to be familiar with how to read the cluster debug log so that you’ll be ready when a real issue actually happens.

Additional Resources

- Resource Hosting Subsystem (RHS) In Windows Server 2008 Failover Clusters (still applicable in Windows Server 2012 R2 and higher)

- Anatomy of a Cluster Log Entry (very old article from way back Windows 2000 but still very applicable)

- DBA 101: Collecting and Interpreting Failover Cluster Logs

Please note: I reserve the right to delete comments that are offensive or off-topic.